SGLang v0.3

❖ We’re excited to announce the release of SGLang v0.3, which brings significant performance enhancements and expanded support for novel model architectures. Key updates: up to 7x higher throughput for DeepSeek Multi-head Latent Attention (MLA); up to 1.5x lower latency with torch.compile on small batch sizes; support for interleaved text and multi-image/video in LLaVA-OneVision; and support for interleaved window attention and 2x longer context length in Gemma-2.

SGLang v0.2

❖ Through our operational experiences and in-depth research, we’ve continuously enhanced the underlying serving systems for Chatbot Arena, spanning from the high-level multi-model serving framework, FastChat, to the efficient serving engine for LLMs and VLMs, SGLang Runtime (SRT). While existing options like TensorRT-LLM, vLLM, and Hugging Face TGI have their merits, we found them sometimes hard to use, difficult to customize, or lacking in performance. This motivated us to create a serving engine that is not only user-friendly and easily modifiable but also delivers top-tier performance.

FlashAttention-3

❖ Attention, as a core layer of the ubiquitous Transformer architecture, is a bottleneck for large language models and long-context applications. FlashAttention (and FlashAttention-2) pioneered an approach to speed up attention on GPUs by minimizing memory reads/writes, and is now used by most libraries to accelerate Transformer training and inference. This has contributed to a massive increase in LLM context length in the last two years, from 2-4K (GPT-3, OPT) to 128K (GPT-4), or even 1M (Llama 3).
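
The "minimizing memory reads/writes" idea can be illustrated with a running (online) softmax: attention is computed one block of keys and values at a time, so the full score row never has to be stored. The sketch below is a pure-Python illustration for a single query vector, not the GPU kernel; the function names and `block_size` are chosen here for illustration only.

```python
import math


def attention_reference(q, keys, values):
    """Standard softmax attention for one query, materializing all scores."""
    scores = [sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(dim)]


def attention_tiled(q, keys, values, block_size=2):
    """Same result, visiting keys/values block by block with a running softmax."""
    dim = len(values[0])
    running_max = float("-inf")   # largest score seen so far
    running_sum = 0.0             # softmax denominator, relative to running_max
    acc = [0.0] * dim             # unnormalized weighted sum of values
    for start in range(0, len(keys), block_size):
        k_block = keys[start:start + block_size]
        v_block = values[start:start + block_size]
        scores = [sum(qi * ki for qi, ki in zip(q, k)) for k in k_block]
        new_max = max(running_max, max(scores))
        # Rescale what was accumulated under the old maximum.
        scale = math.exp(running_max - new_max)
        running_sum *= scale
        acc = [a * scale for a in acc]
        for s, v in zip(scores, v_block):
            w = math.exp(s - new_max)
            running_sum += w
            for d in range(dim):
                acc[d] += w * v[d]
        running_max = new_max
    return [a / running_sum for a in acc]
```

Both functions return the same output up to floating-point error; the tiled version only ever holds one block of scores at a time, which is what lets a real kernel keep its working set in fast on-chip memory.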

SGLang

❖ Large Language Models (LLMs) are increasingly utilized for complex tasks that require multiple chained generation calls, advanced prompting techniques, control flow, and interaction with external environments. However, there is a notable deficiency in efficient systems for programming and executing these applications. To address this gap, we introduce SGLang, a Structured Generation Language for LLMs. SGLang enhances interactions with LLMs, making them faster and more controllable by co-designing the backend runtime system and the frontend languages.

Accelerating Generative AI with PyTorch

❖ Over the past year, generative AI use cases have exploded in popularity. Text generation has been one particularly popular area, with lots of innovation among open-source projects such as llama.cpp, vLLM, and MLC-LLM. While these projects are performant, they often come with tradeoffs in ease of use, such as requiring model conversion to specific formats or building and shipping new dependencies. This raises the question: how fast can we run transformer inference with only pure, native PyTorch?

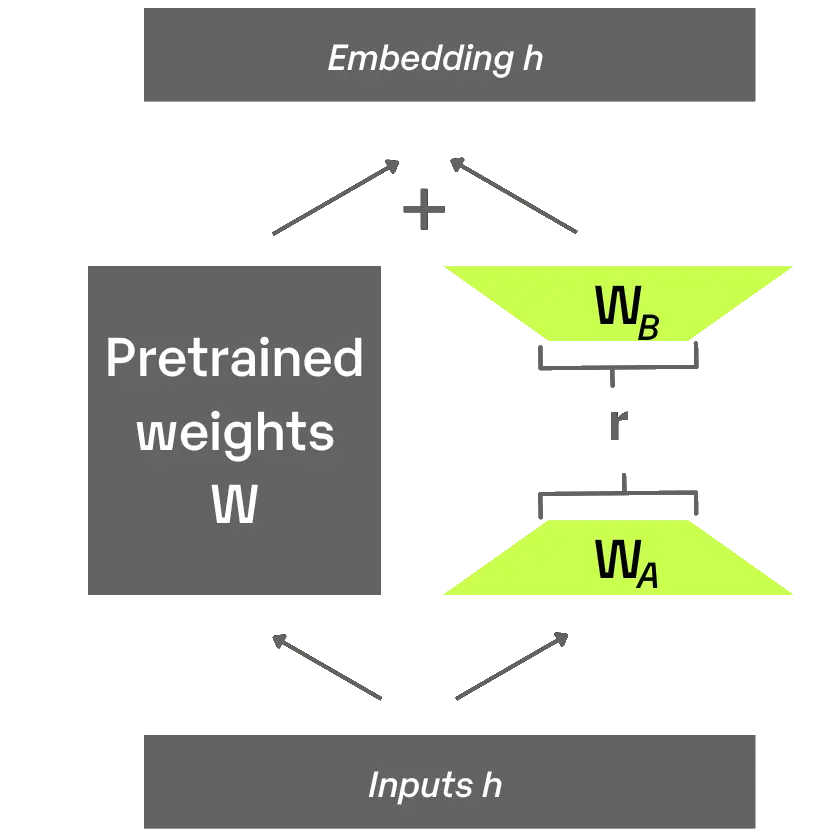

S-LoRA

❖ We introduce S-LoRA, a system designed for the scalable serving of many LoRA adapters. S-LoRA combines three ideas: Unified Paging for the KV cache and adapter weights, to reduce memory fragmentation; Heterogeneous Batching of LoRA computation with different ranks, using optimized custom CUDA kernels aligned with the memory pool design; and S-LoRA TP, a tensor parallelism strategy that ensures effective parallelization across multiple GPUs while incurring minimal communication cost for the added LoRA computation compared to that of the base model.

DeepSpeed-FastGen

❖ Large language models (LLMs) like GPT-4 and LLaMA have emerged as a dominant workload in serving a wide range of applications infused with AI at every level. While frameworks like DeepSpeed, PyTorch, and several others can regularly achieve good hardware utilization during LLM training, the interactive nature of these applications and the poor arithmetic intensity of tasks like open-ended text generation have become the bottleneck for inference throughput in existing systems.

vLLM

❖ LLMs promise to fundamentally change how we use AI across all industries. However, actually serving these models is challenging and can be surprisingly slow even on expensive hardware. We are excited to introduce vLLM, an open-source library for fast LLM inference and serving. vLLM utilizes PagedAttention, our new attention algorithm that effectively manages attention keys and values. vLLM sets a new state of the art in LLM serving: it delivers up to 24x higher throughput than HuggingFace Transformers, without requiring any model architecture changes.
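
To make "manages attention keys and values" more concrete, here is a toy sketch of the paging idea: the KV cache is split into fixed-size blocks, and each sequence keeps a block table mapping its logical token positions to physical blocks. The class and method names below are hypothetical and are not vLLM's API; the sketch tracks only the bookkeeping, not the tensors themselves.

```python
class PagedKVCache:
    """Toy block-table allocator (hypothetical names, illustration only)."""

    def __init__(self, num_blocks, block_size):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))  # physical block ids
        self.block_tables = {}  # sequence id -> list of physical block ids
        self.seq_lens = {}      # sequence id -> number of tokens stored

    def append_token(self, seq_id):
        """Reserve a slot for one more token; return (physical_block, offset)."""
        length = self.seq_lens.get(seq_id, 0)
        if length % self.block_size == 0:
            # The last block is full (or the sequence is new): grab another one.
            if not self.free_blocks:
                raise MemoryError("no free KV blocks")
            self.block_tables.setdefault(seq_id, []).append(self.free_blocks.pop())
        self.seq_lens[seq_id] = length + 1
        table = self.block_tables[seq_id]
        return table[length // self.block_size], length % self.block_size

    def free(self, seq_id):
        """Return all blocks of a finished sequence to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.seq_lens.pop(seq_id, None)
```

Because blocks are allocated on demand and need not be contiguous, a sequence wastes at most one partially filled block, and freed blocks can be reused immediately by other requests.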